Computing power and sophistication have risen remarkably in the past decade, to the point where the phone in your pocket may have as much computational horsepower as a laptop that’s just a few years old. But one thing that’s been resistant to annual breakthroughs is battery technology. Portable computing remains limited by how much power is available.

One way engineers have stretched battery life is by making both processors and code more efficient. The less silicon that needs to be fed, or the less complex the computation, the longer a device can run. We’ve gotten pretty good at optimizing today’s software and devices, but eventually we’ll hit a wall there, too, which is why researchers are looking into approximate computing.

In approximate computing, software tells the chip which calculations only need to be “good enough,” meaning their results can tolerate more error than others. Then either the chip or the software can use an approximate method to complete the task. For something like handwriting recognition, which is imprecise in the first place, permitting a certain amount of error can cut processing time, and with it power use, without noticeably affecting the accuracy of the end result.

Tom Simonite, reporting for Technology Review:

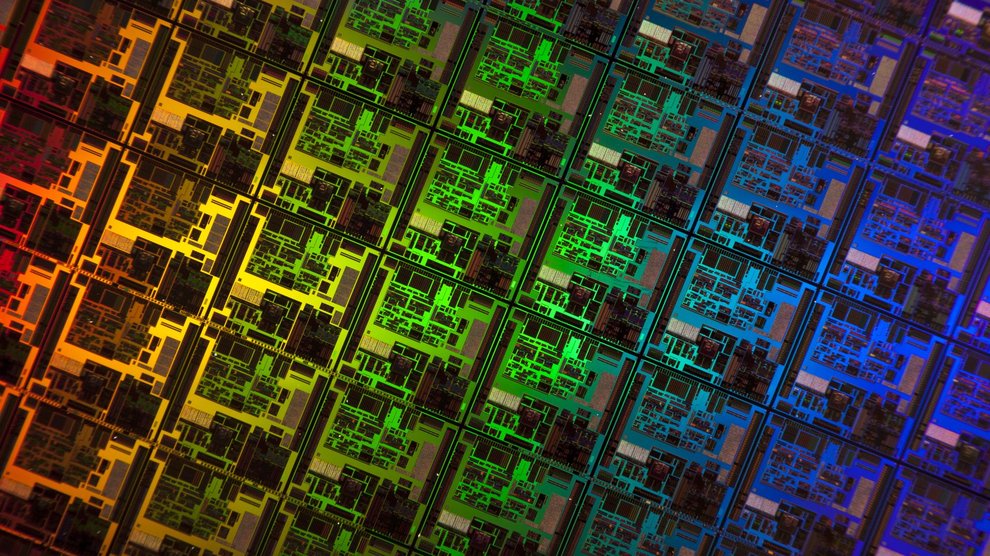

Allowing computers to approximate can save energy in a variety of ways, mostly by removing quality controls on the manipulation of electronic signals. Purdue’s processor design, dubbed Quora, saves energy by scaling back the precision used to express certain values it operates on, which allows some of its circuit elements to remain idle. It also dials down the voltage to some circuit elements when they work on approximated data. Crucially, the design doesn’t do that for every instruction a piece of software directs it to carry out. Instead, it looks for signals written into a program’s code indicating which parts of it are tolerant to some error and by how much.
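To make the idea of scaling back precision concrete, here is a minimal Python sketch. It is not Purdue's design: the `quantize` and `dot` helpers, the `frac_bits` parameter, and the sample data are all hypothetical, and simulating reduced precision in software saves no energy on its own. It only shows how much error is introduced when a calculation marked as error-tolerant runs on coarser values.

```python
def quantize(value: float, frac_bits: int) -> float:
    """Round value to a fixed-point grid with frac_bits fractional bits."""
    scale = 1 << frac_bits
    return round(value * scale) / scale


def dot(a, b, frac_bits=None):
    """Dot product of a and b.

    frac_bits is a hypothetical stand-in for the program's "this part
    tolerates error" signal: when given, inputs are quantized first.
    """
    if frac_bits is not None:
        a = [quantize(x, frac_bits) for x in a]
        b = [quantize(x, frac_bits) for x in b]
    return sum(x * y for x, y in zip(a, b))


# Made-up inputs, e.g. classifier weights applied to pixel intensities.
weights = [0.12, -0.53, 0.88, 0.07]
pixels = [0.91, 0.34, 0.56, 0.25]

exact = dot(weights, pixels)                 # full floating-point precision
approx = dot(weights, pixels, frac_bits=4)   # values snapped to 1/16 steps
error = abs(exact - approx)

print(f"exact={exact:.4f} approx={approx:.4f} error={error:.4f}")
```

With only four fractional bits the result drifts by a few hundredths, which a task like handwriting recognition may well absorb. Raising `frac_bits` shrinks the error, at the cost of (in real hardware) keeping more circuitry active.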

There’s still some debate about the best way to accomplish approximate computing. Some argue that it’s best done in the chip, as with Quora, while others say software is the better option. Regardless, most agree that some amount of error will be commonplace in computing devices in the near future.