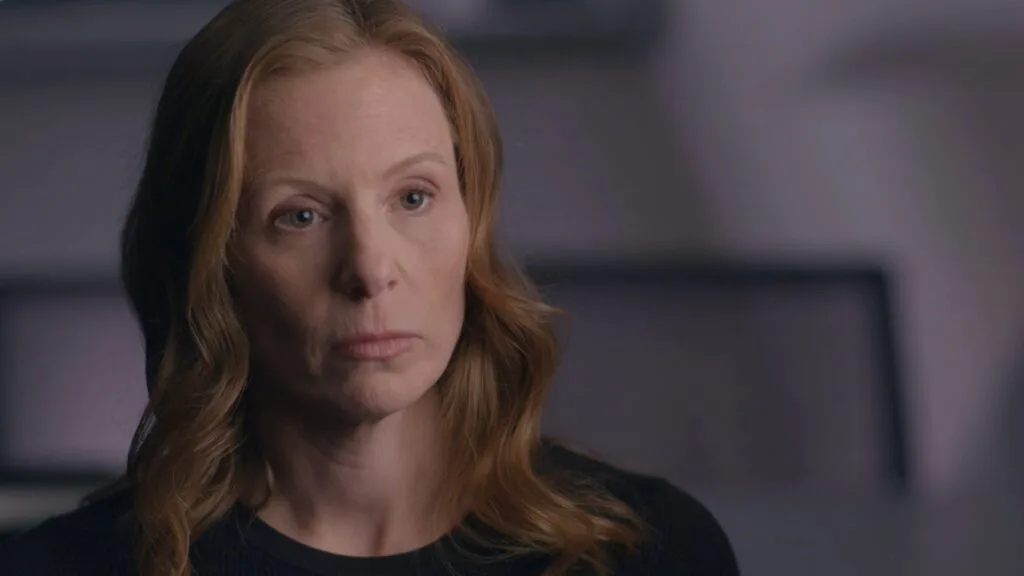

Facebook Executive Monika Bickert: “We’ve Been Too Slow” to Respond

November 21, 2018


Monika Bickert, Facebook’s head of global policy management, acknowledged the company had not done enough to curb content that incited violence in global hotspots, including a looming genocide in Myanmar, despite clear warning signs.

“We have policies that are designed to keep our community safe, but it’s also important for us to enforce these policies, to find bad content quickly, to find violating content quickly and to remove it from the site,” Bickert told FRONTLINE in an interview for The Facebook Dilemma. “And we’ve been too slow to do that in some situations.”

The social network played a significant role in stoking atrocities in Myanmar by allowing hate speech and misinformation against the Rohingya, a persecuted minority group, to spread across the country, a team of United Nations investigators found in September. Their report, which called for the Myanmar army's top brass to be prosecuted for genocide, labeled Facebook’s response as “slow and ineffective.”

As FRONTLINE has reported, Facebook representatives were warned as early as 2015 about the potential for a dangerous situation in the nascent democracy.

In November, Facebook executive Alex Warofka admitted in a blog post that the company did not do enough to prevent the platform “from being used to foment division and incite offline violence” in the Southeast Asian country. The post accompanied a 60-page report, commissioned by Facebook after the broadcast of The Facebook Dilemma, looking into the internet giant’s role in inciting violence against the Rohingya.

Thousands of Rohingya continue to flee to neighboring Bangladesh in what a top U.N. expert, speaking at a press conference in October, called an “ongoing genocide.”

The explosive growth of Facebook and its increasing role in spreading false news and hatred have raised pressing questions about whom Facebook is actually accountable to. Bickert, a former federal prosecutor who also worked on child sex trafficking and counterterrorism in Asia, said that Facebook’s 2.2 billion users hold the company to account.

“If it’s not a safe place for them to come and communicate, they are not going to use it,” she said. “That’s something that we know, and it’s core to our business as well as being the right thing to do for us to make sure that people are safe.”

Myanmar is not the only country where Facebook has been weaponized. The platform was also used to spread misinformation in the Philippines, Egypt and Ukraine, FRONTLINE’s reporting for The Facebook Dilemma found.

More than 85 percent of people on Facebook are outside of North America, according to Bickert. A major challenge for the company is sifting through the millions of reports and pieces of flagged content it receives every week in dozens of languages, and coming up with guidelines on what is acceptable.

“That means our guidance has to be very objective. It has to be fairly black and white if you see this is how we define an attack; this is how we define bullying,” Bickert said. “And it means that the lines are often more blunt than we would like them to be.”

She said her team is deepening relationships with community organizations and language specialists around the world in order to more quickly identify and remove toxic content before it translates into violence in the physical world. There are bi-weekly meetings with lawyers, content reviewers, communications specialists, and others to discuss whether Facebook needs to update its policies. Bickert said the team has made significant progress.

“But where we are now, in terms of using technology to flag potential violations and hiring people who can effectively tell us what are the trends, what do we need to be looking for, how do we determine what is hate speech in Germany or Afghanistan and the language specialists to review that content and remove it, we are far beyond where I thought we would be at this point,” she said. “We still have a lot of work to do.”

